1. Tasks and Threads

When a task begins to run, the applicable task scheduler invokes the task’s user delegate in a thread of its choosing. The task will not migrate among threads at run time. This is a useful guarantee because it lets you use thread-affine abstractions, such as the Microsoft Windows® operating system’s critical sections, without having to worry, for example, that the Monitor.Enter method will be executed in a different thread than the Monitor.Exit method.
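The guarantee can be checked with a small experiment. The following is a minimal sketch, not a definitive test: the class name ThreadAffinityDemo, the Gate lock object, and the spin-wait that stands in for real work are all assumptions made for illustration.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical demo class; the names here are illustrative only.
static class ThreadAffinityDemo
{
    static readonly object Gate = new object();

    // Returns true if the user delegate saw the same managed thread
    // before and after a Monitor-protected region.
    public static bool RunsOnSingleThread()
    {
        Task<bool> task = Task.Factory.StartNew(() =>
        {
            int before = Thread.CurrentThread.ManagedThreadId;
            Monitor.Enter(Gate);
            try
            {
                Thread.SpinWait(100000); // stand-in for real work
            }
            finally
            {
                // Monitor.Exit must run on the thread that called Monitor.Enter.
                Monitor.Exit(Gate);
            }
            int after = Thread.CurrentThread.ManagedThreadId;
            return before == after;
        });
        return task.Result;
    }

    static void Main()
    {
        Console.WriteLine(RunsOnSingleThread());
    }
}
```

In everyday code the lock statement wraps the same Monitor.Enter and Monitor.Exit pair; the explicit calls are used here only to mirror the text.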

2. Task Life Cycle

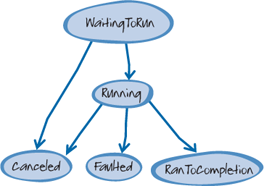

The Status property of a Task instance tracks its life cycle. Figure 1 shows the life cycle of the tasks :

The TaskFactory.StartNew method creates and schedules a new task, which results in the status TaskStatus.WaitingToRun. Eventually, the TaskScheduler instance that is responsible for managing the task begins to execute the task’s user delegate on a thread of its choosing. At this point, the task’s status transitions to TaskStatus.Running.

After the task begins to run, it has three possible outcomes. If the task’s user delegate exits normally, the task’s status transitions to TaskStatus.RanToCompletion. If the task’s user delegate throws an unhandled exception, the task’s status becomes TaskStatus.Faulted.

It’s also possible for a task to end in TaskStatus.Canceled. For this to occur, you must pass a CancellationToken as an argument to the factory method that created the task. If that token signals a request for cancellation before the task begins to execute, the task won’t be allowed to run. The task’s Status property will transition directly to TaskStatus.Canceled without ever invoking the task’s user delegate. If the token signals a cancellation request after the task begins to execute, the task’s Status property will only transition to TaskStatus.Canceled if the user delegate throws an OperationCanceledException and that exception’s CancellationToken property contains the token that was given when the task was created.

There is one more task status, TaskStatus.Created. This is the status of a task immediately after it’s created by the Task class’s constructor; however, it’s recommended that you use a factory method to create tasks instead of the new operator.
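The transitions described above can be observed directly. The following is a minimal sketch under stated assumptions: the class TaskStatusDemo and its helper methods are hypothetical names introduced for illustration, and each method simply waits for a task and reports its final Status.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical demo class; the method names are illustrative only.
static class TaskStatusDemo
{
    // Waits for the task, swallows the AggregateException that Wait throws
    // for faulted or canceled tasks, and reports the final status.
    static TaskStatus FinalStatus(Task task)
    {
        try { task.Wait(); } catch (AggregateException) { }
        return task.Status;
    }

    // Constructed but never started.
    public static TaskStatus CreatedOnly()
    {
        return new Task(() => { }).Status;
    }

    public static TaskStatus Completed()
    {
        return FinalStatus(Task.Factory.StartNew(() => { }));
    }

    public static TaskStatus Faulted()
    {
        Action fail = () => { throw new InvalidOperationException("demo failure"); };
        return FinalStatus(Task.Factory.StartNew(fail));
    }

    // The token is already signaled, so the delegate is never invoked.
    public static TaskStatus CanceledBeforeStart()
    {
        var cts = new CancellationTokenSource();
        cts.Cancel();
        return FinalStatus(Task.Factory.StartNew(() => { }, cts.Token));
    }

    // The delegate throws OperationCanceledException carrying the task's own token.
    public static TaskStatus CanceledWhileRunning()
    {
        var cts = new CancellationTokenSource();
        var started = new ManualResetEventSlim(false);
        CancellationToken token = cts.Token;
        Task task = Task.Factory.StartNew(() =>
        {
            started.Set();
            while (!token.IsCancellationRequested) Thread.Sleep(10);
            token.ThrowIfCancellationRequested();
        }, token);
        started.Wait();   // make sure the delegate is running before canceling
        cts.Cancel();
        return FinalStatus(task);
    }

    // The exception carries a token other than the task's, so the task faults.
    public static TaskStatus ThrowsWithUnrelatedToken()
    {
        var cts = new CancellationTokenSource();
        Action body = () => { throw new OperationCanceledException(new CancellationToken(true)); };
        return FinalStatus(Task.Factory.StartNew(body, cts.Token));
    }

    static void Main()
    {
        Console.WriteLine(CreatedOnly());              // Created
        Console.WriteLine(Completed());                // RanToCompletion
        Console.WriteLine(Faulted());                  // Faulted
        Console.WriteLine(CanceledBeforeStart());      // Canceled
        Console.WriteLine(CanceledWhileRunning());     // Canceled
        Console.WriteLine(ThrowsWithUnrelatedToken()); // Faulted
    }
}
```

The last case illustrates why the token match matters: an OperationCanceledException that carries some other token is treated like any other unhandled exception.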

3. Writing a Custom Task Scheduler

The .NET Framework includes two task scheduler implementations: the default task scheduler and a task scheduler that runs tasks on a target synchronization context. If these task schedulers don’t meet your application’s needs, it’s possible to implement a custom task scheduler.

There are a number of advanced scenarios where creating a custom task scheduler is relevant. For example, you could implement a custom task scheduler if you wanted to enforce a single maximum degree of parallelism across multiple loops and tasks instead of across a single loop. You could also create a custom task scheduler if you want to implement alternative scheduling algorithms, for example, to ensure fairness among batches of work. Finally, you can implement a custom task scheduler if you want to use a specific set of threads, such as single-threaded apartment (STA) threads, instead of thread pool worker threads.

You can see how to create a custom task scheduler by looking at the ParallelExtensionsExtras project that is part of the Microsoft Parallel Samples package. This package includes an implementation of a QueuedTaskScheduler class that provides multiple global task queues that use round-robin scheduling. A custom scheduler doesn’t need to use its own threads; it can simply limit the number of tasks that are allowed to run concurrently but still use the thread pool. This is the approach taken by the LimitedConcurrencyLevelTaskScheduler class in the ParallelExtensionsExtras project.
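To give a sense of what a custom scheduler involves, here is a minimal sketch of one that runs every task on a single dedicated thread. It is not the implementation used in ParallelExtensionsExtras; the class name SingleThreadTaskScheduler, the WorkerThreadId property, and the SchedulerDemo helper are hypothetical and exist only for this illustration. The three overridden members (QueueTask, TryExecuteTaskInline, and GetScheduledTasks) are the abstract members that any TaskScheduler subclass must supply.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical example class; not part of the .NET Framework or the samples package.
sealed class SingleThreadTaskScheduler : TaskScheduler, IDisposable
{
    private readonly BlockingCollection<Task> queue = new BlockingCollection<Task>();
    private readonly Thread worker;

    public SingleThreadTaskScheduler()
    {
        worker = new Thread(() =>
        {
            // Runs queued tasks one at a time until CompleteAdding is called.
            foreach (Task task in queue.GetConsumingEnumerable())
                TryExecuteTask(task);
        });
        worker.IsBackground = true;
        worker.Start();
    }

    public int WorkerThreadId { get { return worker.ManagedThreadId; } }

    public override int MaximumConcurrencyLevel { get { return 1; } }

    protected override void QueueTask(Task task)
    {
        queue.Add(task);
    }

    protected override bool TryExecuteTaskInline(Task task, bool taskWasPreviouslyQueued)
    {
        // Inline only when already on the dedicated thread. TryExecuteTask
        // returns false if the task has already run, so a later dequeue is harmless.
        return Thread.CurrentThread.ManagedThreadId == worker.ManagedThreadId
            && TryExecuteTask(task);
    }

    protected override IEnumerable<Task> GetScheduledTasks()
    {
        // Snapshot of pending tasks, intended for debugger support.
        return queue.ToArray();
    }

    public void Dispose()
    {
        queue.CompleteAdding(); // lets the worker loop drain and exit
        worker.Join();
        queue.Dispose();
    }
}

static class SchedulerDemo
{
    // Returns true if a task started on the scheduler ran on its worker thread.
    public static bool RunsOnWorker()
    {
        using (var scheduler = new SingleThreadTaskScheduler())
        {
            Task<int> task = Task.Factory.StartNew(
                () => Thread.CurrentThread.ManagedThreadId,
                CancellationToken.None, TaskCreationOptions.None, scheduler);
            return task.Result == scheduler.WorkerThreadId;
        }
    }

    static void Main()
    {
        Console.WriteLine(RunsOnWorker());
    }
}
```

A scheduler aimed at STA scenarios would additionally set the apartment state of its worker thread before starting it; that step is omitted here to keep the sketch platform-neutral.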

4. Unobserved Task Exceptions

If you don’t give a faulted task the opportunity to propagate its exceptions (for example, by calling the Wait method), the runtime will escalate the task’s unobserved exceptions according to the current .NET exception policy when the task is garbage-collected. Unobserved task exceptions will eventually be observed in the finalizer thread context. The finalizer thread is the system thread that invokes the Finalize method of objects that are ready to be garbage-collected. If an unhandled exception is thrown during the execution of a Finalize method, the runtime will, by default, terminate the current process, and no active try/finally blocks or additional finalizers will be executed, including finalizers that release handles to unmanaged resources. To prevent this from happening, you should be very careful that your application never leaks unobserved task exceptions. You can also elect to receive notification of any unobserved task exceptions by subscribing to the UnobservedTaskException event of the TaskScheduler class and choose to handle them as they propagate into the finalizer context.

This last technique can be useful in scenarios such as hosting untrusted plug-ins that have benign exceptions that would be cumbersome to observe.
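Both approaches can be sketched in a few lines. This is an illustrative sketch rather than a recommended policy: the class ExceptionObservationDemo and its method names are hypothetical, and logging to standard error is an assumption. Note also that the default escalation policy is more lenient on .NET Framework 4.5 and later than the behavior described above, so the consequences of a leaked exception depend on the runtime version and configuration.

```csharp
using System;
using System.Threading.Tasks;

// Hypothetical demo class; the names are illustrative only.
static class ExceptionObservationDemo
{
    // Opt-in notification: logs each unobserved exception and marks it
    // as observed so that it is not escalated any further.
    public static void LogAndObserveUnobserved()
    {
        TaskScheduler.UnobservedTaskException += (sender, e) =>
        {
            Console.Error.WriteLine("Unobserved task exception: " + e.Exception);
            e.SetObserved();
        };
    }

    // Preferred: observe the exception at a known point by waiting on the task.
    // Returns the message of the first inner exception, or null if none was thrown.
    public static string ObserveByWaiting()
    {
        Action fail = () => { throw new InvalidOperationException("demo failure"); };
        Task task = Task.Factory.StartNew(fail);
        try
        {
            task.Wait();
            return null;
        }
        catch (AggregateException ae)
        {
            return ae.InnerException.Message;
        }
    }

    static void Main()
    {
        LogAndObserveUnobserved();
        Console.WriteLine(ObserveByWaiting()); // demo failure
    }
}
```

The event handler runs only when an abandoned task is finalized, so its timing depends on garbage collection; waiting on the task makes the observation point explicit.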

During finalization, tasks that have a Status property of Faulted are treated differently from tasks with the status Canceled. The task’s status determines how unobserved task exceptions that arise from task cancellation are treated during finalization. If the cancellation token that was passed as an argument to the StartNew method is the same token as the one embedded in the unobserved OperationCanceledException instance, the task does not propagate the operation-canceled exception to the UnobservedTaskException event or to the finalizer thread context.

5. Relationship Between Data Parallelism and Task Parallelism

The Parallel.Invoke method was described as creating tasks for each of the delegates passed in its params array. As a mental model, this is fine. In practice, this isn’t always how it’s actually executed. For example, with enough delegates in the params list, TPL may, for performance reasons, use a parallel loop to invoke all the delegates.

This highlights how task parallelism and data parallelism are related. If you represent each operation to be performed as a delegate, you can then use data parallelism to perform the same operation (invoking the delegate) on each piece of data (the delegate). Conversely, if you have a data parallel problem, you can approach it in a task-parallel manner by spinning up a task to process each individual operation.
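The equivalence can be made concrete by running the same array of delegates three ways. The following is a minimal sketch; the class InvokeEquivalenceDemo and its methods are hypothetical names, and the workload (adding the numbers 1 through 10 to a shared total) is chosen only because the result is easy to verify. It says nothing about which strategy TPL picks internally.

```csharp
using System;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical demo class; the names are illustrative only.
static class InvokeEquivalenceDemo
{
    // Task-parallel API: one call, many delegates.
    public static void ViaInvoke(Action[] actions)
    {
        Parallel.Invoke(actions);
    }

    // Data-parallel view: the delegates are the data, invoking is the operation.
    public static void ViaLoop(Action[] actions)
    {
        Parallel.ForEach(actions, action => action());
    }

    // Explicit tasks: one task per delegate.
    public static void ViaTasks(Action[] actions)
    {
        Task[] tasks = actions.Select(a => Task.Factory.StartNew(a)).ToArray();
        Task.WaitAll(tasks);
    }

    // Builds ten delegates that add 1..10 to a shared total, runs them
    // with the given strategy, and returns the total.
    public static int SumWith(Action<Action[]> runner)
    {
        int total = 0;
        Action[] actions = Enumerable.Range(1, 10)
            .Select(i => (Action)(() => Interlocked.Add(ref total, i)))
            .ToArray();
        runner(actions);
        return total;
    }

    static void Main()
    {
        Console.WriteLine(SumWith(ViaInvoke)); // 55
        Console.WriteLine(SumWith(ViaLoop));   // 55
        Console.WriteLine(SumWith(ViaTasks));  // 55
    }
}
```

All three strategies produce the same total because each delegate runs exactly once and the shared counter is updated atomically; only the scheduling differs.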